A construction estimator pulling feeders off a single-line diagram (SLD) is doing four things at once. They're reading the SLD. Cross-checking against the panel schedules on a different sheet. Sanity-checking against the floor plan to confirm panel locations. And keeping a running mental list of discrepancies that need an RFI before the bid goes out.

A real agentic estimator has to do the same four things. Not a model that summarizes drawings. Not a chatbot that answers questions about uploaded PDFs. An agent that reads the same documents an estimator reads, runs the same cross-references an estimator runs, flags the same conflicts an estimator flags, and writes back into the project with the same level of accountability.

We built one. His name is Bob. He works inside Microsoft Teams. This post is about how.

The Reasoning Comes First

Before we talk about the chat surface or the architecture, we should cover the part of Bob that took the longest to get right: the cross-reference behavior. It's also the part that most distinguishes an estimating agent from a generic document-Q&A bot.

Construction errors compound. A wrong panel connection flows into wrong feeder sizing, which flows into wrong material quantities, which flows into a purchase order that's $40,000 off. An agent that picks the first number it sees is dangerous. So Bob is built to argue with the drawings.

Here's a typical session:

Estimator: Pull up the 127-page set and let's start on electrical.

Bob: Plan set loaded. Where would you like to start?

Estimator: Let's run feeders first. Run point-to-point on the riser and cross-reference against the panel schedules.

Bob: Running. Point-to-point complete, here's the output for confirmation. Flagging a discrepancy: the SLD shows LP-2A at 225A, the panel schedule shows 400A. Which one should we use? Should we flag for RFI?

Estimator: Flag for RFI, use the larger of the two for now. Extraction looks good, go run it.

Bob: Measurements complete. Here's the link for visual verification and adjustment.

Six turns. Each one depends on the previous. Bob holds conversation state across them, knows which project and plan set are in scope, and carries corrections forward.

The interesting part isn't state management. It's the flag for RFI.

When Bob extracts a panel schedule and an SLD, he doesn't accept either as ground truth. He reconciles them. If they disagree on breaker sizing, panel feeds, or circuit assignments, he quotes both, cites the page numbers, and asks the estimator how to resolve it: pick one, split the difference, flag for RFI. Whatever the estimator decides, Bob records it. The audit trail shows which schedule, which line, which page, and which decision the final number came from.
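A minimal sketch of that reconcile-and-record loop, to make the shape concrete. This is not Bob's code: `Reading`, `Discrepancy`, `reconcile`, and `record_decision` are hypothetical names, the attribute key `main_rating_amps` is an assumption, and the page numbers in the usage lines are made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Reading:
    """One extracted value plus where it came from (hypothetical structure)."""
    source: str      # e.g. "SLD" or "panel schedule"
    page: int        # page of the plan set the value was read from
    panel: str       # e.g. "LP-2A"
    attribute: str   # e.g. "main_rating_amps" (assumed key name)
    value: object


@dataclass
class Discrepancy:
    panel: str
    attribute: str
    readings: Tuple[Reading, Reading]
    decision: Optional[str] = None   # set by the estimator, never by the agent

    def describe(self) -> str:
        # Quote both values and cite both pages.
        parts = [f"{r.source} (p. {r.page}) shows {r.value}" for r in self.readings]
        return f"{self.panel} {self.attribute}: " + " vs. ".join(parts)


def reconcile(sld: List[Reading], schedule: List[Reading]) -> List[Discrepancy]:
    """Pair readings by (panel, attribute) and return the ones that disagree."""
    by_key = {(r.panel, r.attribute): r for r in sld}
    found = []
    for r in schedule:
        other = by_key.get((r.panel, r.attribute))
        if other is not None and other.value != r.value:
            found.append(Discrepancy(r.panel, r.attribute, (other, r)))
    return found


def record_decision(d: Discrepancy, decision: str, audit_log: list) -> None:
    """Store the estimator's call next to both cited readings."""
    d.decision = decision
    audit_log.append({
        "panel": d.panel,
        "attribute": d.attribute,
        "readings": [(r.source, r.page, r.value) for r in d.readings],
        "decision": decision,
    })


# Usage with the LP-2A example from the session (page numbers are invented).
sld = [Reading("SLD", 12, "LP-2A", "main_rating_amps", 225)]
schedule = [Reading("panel schedule", 47, "LP-2A", "main_rating_amps", 400)]
audit_log = []
for d in reconcile(sld, schedule):
    print(d.describe())
    record_decision(d, "flag for RFI; use larger value for now", audit_log)
```

The point of the design is that neither source is treated as ground truth in the sketch: `reconcile` only reports, and the only code path that settles a value is the one that writes the estimator's decision into the log.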

This was the hardest design choice we made. Too passive and Bob hides real conflicts in the data. Too aggressive and he interrupts every extraction with cosmetic inconsistencies that don't matter. The middle (flag substantive discrepancies once, ask, record, move on) took months of customer iteration to calibrate.
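One way to picture that middle setting, as a sketch under loud assumptions: call a difference cosmetic when it vanishes after stripping formatting, and ask about any given item only once. The normalization rule and the `Flagger` class are illustrative stand-ins, not the calibration Bob actually uses.

```python
import re


def normalize(value: str) -> str:
    """Collapse formatting-only differences: case, whitespace, hyphens, underscores."""
    return re.sub(r"[\s\-_]+", "", value).upper()


class Flagger:
    """Flags a substantive discrepancy once per key, then stays quiet (hypothetical)."""

    def __init__(self) -> None:
        self._asked = set()

    def should_flag(self, key: tuple, a: str, b: str) -> bool:
        if normalize(a) == normalize(b):
            return False          # cosmetic: "225A" vs "225 a"
        if key in self._asked:
            return False          # already raised with the estimator
        self._asked.add(key)
        return True


flagger = Flagger()
key = ("LP-2A", "main_rating")
print(flagger.should_flag(key, "225A", "225 a"))   # formatting only
print(flagger.should_flag(key, "225A", "400A"))    # real conflict, first time
print(flagger.should_flag(key, "225A", "400A"))    # same conflict again
```

A real threshold would need domain rules well beyond string cleanup; the sketch only shows where the two failure modes (too passive, too aggressive) sit in the control flow.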

It's also the part of the agent that customers explicitly pay for. They don't need another tool that extracts data from PDFs. They need one that reasons about the data the way a senior estimator would, including the pushback.

What We Got Wrong

The first version of Bob was too agreeable with the drawings. He'd extract panel schedules, extract the SLD riser, notice they disagreed, and quietly pick one. If the SLD showed LP-2A feeding from MDP-1 and the panel schedule showed LP-2A feeding from DP-3, Bob would shrug and use whichever he extracted last. The estimator found out when the feeder sizing came back wrong.

The other thing we got wrong was assuming estimators would use the same vocabulary across customers. They don't. They abbreviate. They use shorthand that varies by region, by company, by individual. "PNL" means "panel" on a single-line diagram but might mean something else on a floor plan from a different firm. Notes on a riser drawn by an Atlanta-based EC look different from notes on one drawn by a Boston-based EC. Bob had to learn the dialect, and the dialect is per-customer.

That's not a research problem. It's a deployment problem. Each customer installation accumulates a small mapping of their idioms over the first few projects, and Bob's extraction logic consults it. The first project with a new customer is always the slowest. By the third, his output reads like the work of a senior in-house estimator who's been there for years.
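A sketch of what such a per-customer mapping could look like, assuming idioms are keyed by sheet type as well as by token (since the same abbreviation can mean different things on different drawings). The function names, the `"sld"` and `"floor_plan"` labels, and the `"access panel"` meaning are all hypothetical; only the "PNL" means "panel" entry comes from the text above.

```python
from typing import Dict, Optional, Tuple

IdiomTable = Dict[Tuple[str, str], str]   # (sheet_type, TOKEN) -> meaning

# Shared defaults; "*" as a sheet type would mean "applies everywhere".
BASE_IDIOMS: IdiomTable = {
    ("sld", "PNL"): "panel",
}


def expand(token: str, sheet_type: str, customer: IdiomTable,
           base: IdiomTable = BASE_IDIOMS) -> Optional[str]:
    """Look up a token, preferring the customer's table over the shared one."""
    key = token.strip().upper()
    for table in (customer, base):
        for scope in (sheet_type, "*"):
            meaning = table.get((scope, key))
            if meaning is not None:
                return meaning
    return None   # unknown: better to ask the estimator than to guess


def learn(customer: IdiomTable, sheet_type: str, token: str, meaning: str) -> None:
    """Record an idiom the estimator confirmed, for this customer only."""
    customer[(sheet_type, token.strip().upper())] = meaning


acme: IdiomTable = {}                       # a new customer starts empty
print(expand("pnl", "sld", acme))           # falls back to the shared table
print(expand("pnl", "floor_plan", acme))    # not known yet
learn(acme, "floor_plan", "PNL", "access panel")   # made-up meaning
print(expand("pnl", "floor_plan", acme))
```

Returning `None` for an unknown token, instead of a best guess, is what makes the first project slow and the third one fast: each miss becomes a question, and each answer becomes an entry.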

Why Teams

The construction industry has a specific relationship with technology: skeptical, pragmatic, and ruthless about anything that slows down the work. An estimator doing a takeoff is in a state of deep focus. They're cross-referencing single-line diagrams with floor plans, checking NEC compliance, calculating feeder lengths, and building material lists that will become purchase orders worth tens of thousands of dollars.

Pulling them out of that flow to open a browser, navigate to a dashboard, upload files, and click through a wizard is friction. Friction kills adoption. We watched it happen with the first version of our product, which had a perfectly serviceable web UI nobody opened.

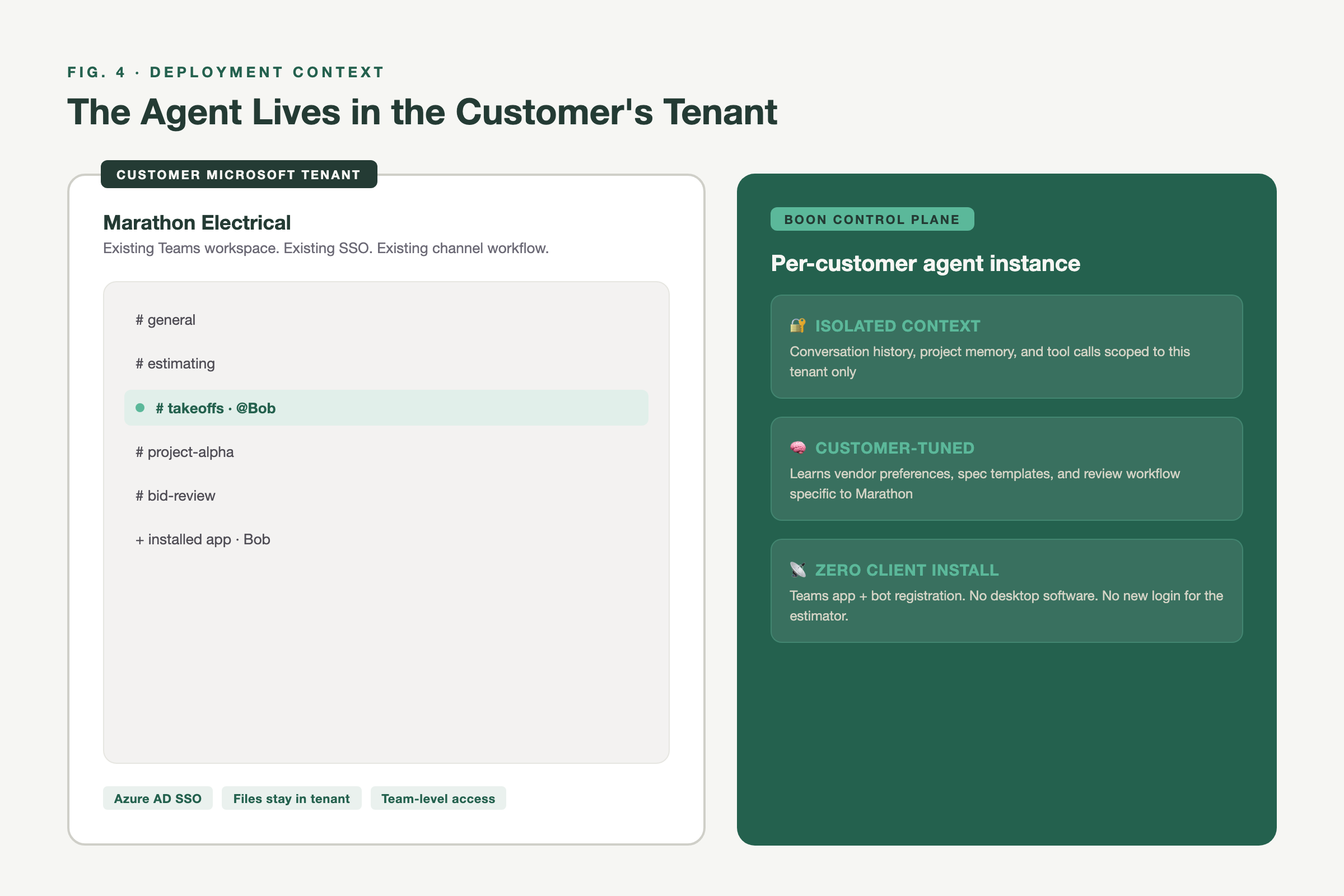

Teams was the answer because it was already open. Every general contractor and large sub we talked to was running Teams. Their project managers, superintendents, and estimators were already communicating there. The agent could slot into their existing workflow instead of creating a new one.

The chat surface is a delivery choice, not the core idea. The agent could run inside any messaging surface a customer uses. Today that's Teams.

The Architecture

Bob is a skills-based agent in the Anthropic Skills sense: each capability (panel schedule extraction, feeder routing, BOM generation, spec analysis) is a discrete skill with its own prompts, tool definitions, and validation logic. Skills are versioned per customer installation.
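To illustrate "discrete skill, versioned per installation," here is a generic registry sketch. It is not the Anthropic Skills file format and not Bob's implementation; `Skill`, `SkillRegistry`, the version strings, and the customer names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Skill:
    name: str                          # e.g. "panel_schedule_extraction"
    version: str
    prompt: str
    tools: Tuple[str, ...]             # names of tool definitions the skill may call
    validate: Callable[[dict], List[str]]   # returns a list of problems, empty if OK


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: Dict[Tuple[str, str], Skill] = {}
        self._pins: Dict[Tuple[str, str], str] = {}   # (customer, skill) -> version

    def register(self, skill: Skill) -> None:
        self._skills[(skill.name, skill.version)] = skill

    def pin(self, customer: str, name: str, version: str) -> None:
        """Fix which version of a skill a customer installation runs."""
        if (name, version) not in self._skills:
            raise KeyError(f"unknown skill {name}@{version}")
        self._pins[(customer, name)] = version

    def resolve(self, customer: str, name: str) -> Skill:
        return self._skills[(name, self._pins[(customer, name)])]


def has_circuits(result: dict) -> List[str]:
    """Toy validation rule: an extracted schedule must list circuits."""
    return [] if result.get("circuits") else ["no circuits extracted"]


registry = SkillRegistry()
for v in ("1.0", "1.1"):
    registry.register(Skill("panel_schedule_extraction", v,
                            prompt=f"prompt text for v{v}",
                            tools=("read_sheet",), validate=has_circuits))
registry.pin("customer-a", "panel_schedule_extraction", "1.0")
registry.pin("customer-b", "panel_schedule_extraction", "1.1")
print(registry.resolve("customer-a", "panel_schedule_extraction").version)
```

Pinning per customer means an update to one installation's skill cannot silently change another installation's output.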

Underneath, two services do the work. A Rails system of record holds the project model: drawings, annotations, estimation data, exports. A Python inference layer handles the actual AI processing, model orchestration, and structured output parsing. Bob orchestrates between them. When an estimator asks "pull the panel schedules from the electrical drawings," he composes a multi-step workflow against both services and streams results back into the chat.
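A rough sketch of that orchestration, assuming each service is reached through a thin client. The `SystemOfRecord` and `InferenceLayer` interfaces, their method names, and the message wording are invented for illustration; the real Rails and Python services will expose different APIs.

```python
from typing import Dict, Iterator, List, Protocol


class SystemOfRecord(Protocol):
    """Assumed client for the project model (drawings, estimation data)."""
    def electrical_sheets(self, project_id: str) -> List[Dict]: ...
    def save_extraction(self, project_id: str, sheet_id: str, data: Dict) -> None: ...


class InferenceLayer(Protocol):
    """Assumed client for the AI processing service."""
    def extract_panel_schedule(self, sheet: Dict) -> Dict: ...


def pull_panel_schedules(project_id: str, record: SystemOfRecord,
                         inference: InferenceLayer) -> Iterator[str]:
    """Multi-step workflow: fetch sheets, extract each, write back, report."""
    sheets = record.electrical_sheets(project_id)
    yield f"Found {len(sheets)} electrical sheets."
    for sheet in sheets:
        data = inference.extract_panel_schedule(sheet)
        record.save_extraction(project_id, sheet["id"], data)
        # Each yielded string would become one message in the chat thread.
        yield f"Sheet {sheet['id']}: extracted {data['panel']}."
```

Writing the workflow as a generator keeps the orchestration logic separate from the chat surface: whatever posts messages simply iterates over it.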

Each construction trade has its own skill bundle. Electrical is the most mature. Mechanical, plumbing, and steel each have their own quirks and their own validation rules. The skill abstraction lets us deploy domain-specific reasoning without forking the whole agent.

Progressive Results

A panel schedule extraction can return 30 panels with 42 circuits each. A BOM can have hundreds of line items. Dumping all of that into a single Teams message at the end of a 90-second wait is unusable, and it's the wrong interaction model for a conversation.

Bob returns results in chunks. Panel schedules come back as formatted tables, one schedule at a time. BOMs are grouped by category. Each chunk is a separate message in the thread, so the estimator can scan early results while later ones are still processing.
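The chunking itself can be sketched with plain generators: one message per panel schedule, one message per BOM category. The field names (`category`, `qty`, `unit`, `description`, `panel`, `circuits`) and the sample rows are assumptions for illustration, not Bob's data model.

```python
from itertools import groupby
from operator import itemgetter
from typing import Dict, Iterable, Iterator


def bom_chunks(line_items: Iterable[Dict]) -> Iterator[str]:
    """Yield one chat-sized message per BOM category."""
    # groupby only groups adjacent items, so sort by category first.
    ordered = sorted(line_items, key=itemgetter("category"))
    for category, group in groupby(ordered, key=itemgetter("category")):
        rows = [f"{i['qty']:>6} {i['unit']:<4}{i['description']}" for i in group]
        yield category + "\n" + "\n".join(rows)


def schedule_chunks(schedules: Iterable[Dict]) -> Iterator[str]:
    """Yield one message per panel schedule, as each one becomes available."""
    for s in schedules:
        header = f"{s['panel']} ({len(s['circuits'])} circuits)"
        rows = [f"{c['number']:>3}  {c['load']}" for c in s["circuits"]]
        yield header + "\n" + "\n".join(rows)


# Illustrative data only.
items = [
    {"category": "Wire", "qty": 1200, "unit": "ft", "description": "#12 THHN"},
    {"category": "Conduit", "qty": 500, "unit": "ft", "description": "3/4 in. EMT"},
    {"category": "Wire", "qty": 300, "unit": "ft", "description": "#10 THHN"},
]
for message in bom_chunks(items):
    print(message, end="\n\n")   # in practice: post as a separate thread message
```

Note the asymmetry: `schedule_chunks` can emit as soon as each schedule is ready, while `bom_chunks` has to see every line item before it can sort and group, which is one plausible reason some results arrive in a lump.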

We're still tuning the cadence. Some chunks feel right. Others arrive in a lump at the end, once the underlying extraction finishes. The direction is settled; the consistency is not.

Two Ways to Buy

Customers buy the system of record, the agent, or both.

The system of record is the traditional product: upload drawings, get structured data out, export to Excel or your bid software. The agent (Bob) is the conversational layer on top, with usage-based pricing tied to the work it does. Customers who want both get a fully connected stack, where Bob can reference everything in the project model while running his own extractions. Customers who want the agent alone run it as an estimator's chat companion, no upload step, no project database.

We deliberately don't force a customer to adopt our full estimation workflow before they can use the agent. The agent has to earn its place in the existing workflow first. Then the rest of the platform follows, or it doesn't.

What's Hard

A few things that don't fit neatly into the architecture story but matter every day.

Drawing styles vary so widely that no single extraction strategy works on every plan set. Hospital electrical drawings from a national MEP firm look nothing like a tenant-improvement set drawn by a regional EC. Bob has to recognize the style first, then pick the right extraction approach. We're not done with this.

Cross-discipline checks (electrical against mechanical against plumbing on the same floor) are still mostly out of scope. An estimator catches conflicts across trades by walking the drawings. Bob catches them within a trade. The cross-discipline version is a different problem and we haven't shipped it yet.

The conversational interface looks effortless when it works. When the agent gets confused or misroutes a query, the recovery path is awkward. Reset and retry is the current answer. We'd like better.

Why This Matters

The construction industry accounts for $1.3 trillion in annual U.S. spending, and the estimating workflow hasn't fundamentally changed in decades. You still have a human staring at drawings, counting things, and typing numbers into spreadsheets. The bottleneck isn't extraction. Plenty of tools extract.

The bottleneck is reasoning: cross-referencing sources, flagging discrepancies, deciding what's a real conflict versus a cosmetic one, escalating to the estimator only when escalation is warranted. That's the work. That's where an agent earns the seat.

We're not done. Bob's accuracy on some drawing styles isn't where we want it. The cross-discipline reasoning isn't shipped. The recovery path when the agent misroutes is rough. But the architecture is right, and the deployment pattern (run inside the tool the estimator already has open) is right.

If you're building agents for a skeptical industry, consider that the chat surface is the easy part. The hard part is whether the thing on the other end of the chat actually reasons about the work, or just summarizes it.